Data centres form the core of the globally interconnected infrastructure we call the ‘cloud’. At its most basic, cloud computing refers to an infrastructural shift from desktop computing (where files and applications were stored on the local hard drives of our computers) to a form of online computing (where these are stored in data centres accessed remotely ‘as a service’ through the Internet). Despite the image of fluffy ethereality the cloud metaphor conjures, the creeping ubiquity of cloud computing is underpinned by an expansive digital-industrial infrastructure that is aggressively expanding across the surface of the planet.

Architectural Computing

As a building type, the data centre first emerged during the dot-com bubble between 1995 and 2001. Businesses had become dependent on their IT systems. Growing awareness of the potential disruption that would follow an IT-related disaster meant servers (pizza-box shaped computers) were quickly moved from ‘the cupboard under the stairs’ and installed in dedicated large-scale facilities. Providing a centralized space for the sole purpose of data storage, the data centre was the architectural solution to mitigating the twin risks of downtime and data loss. These buildings aimed to ensure that business-critical IT systems could recover quickly in the event of failure, with minimal disruption to service.

As non-stop computing became a standard business requirement, data centres became permanent fixtures on the industrial landscape. In the early days of the Internet, businesses rented space in data centres and filled it with their IT equipment. Unlike in-house office-based computing, data centres provided physical security, a diversity of network connectivity and round-the-clock maintenance. This service model for delivering computation, where multiple clients shared data centre space, was known as ‘colocation’ (‘colo’ for short) and formed the foundation for the industrialization of computing that would become the cloud.

The infrastructural lineage of the data centre can be found in the monolithic architectures of the telecommunications-industrial complex. Many of these early ‘colo’ facilities were palimpsestically housed in the carcasses of obsolete communications buildings: telephone switching stations, carrier hotels and early Internet exchange points (IXPs), inheriting the Brutalist architectural aesthetic of these outmoded equipment buildings in the process. The rapid obsolescence of these built spaces – and their repurposed afterlives as data centres – foreshadows the eventual desuetude that awaits the cloud, reminding us of the short lifespan of technological advancements and of the wastes and excesses endemic to endless tech-upgrading. When not retrofitted in abandoned structures, purpose-built data centres at the start of this century were typically large, windowless warehouses that remained relatively blank and featureless, prioritizing functionality over form.

Today, these once mundane service infrastructures have transformed into highly-designed expressive forms. Contrary to claims that data centres strive to remain anonymous and hidden, their images increasingly saturate the mediasphere, reconfiguring their previous anti-monumental status.

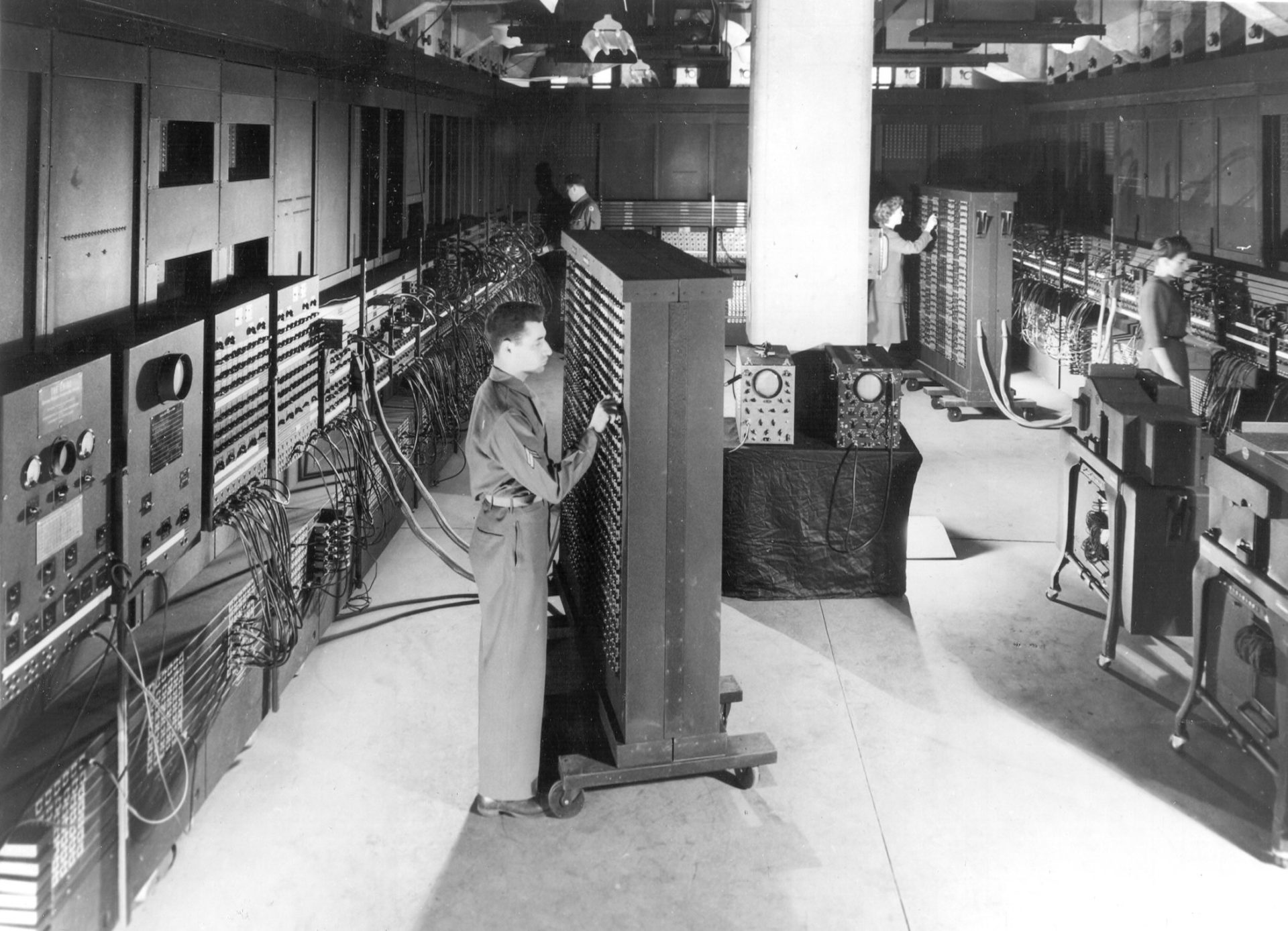

Beyond their razor-wired perimeters and their increasingly stylized but logoless facades, these buildings reveal an almost science fictional technoscape: sterile deserts of startling ‘white space’; corridors canopied with multi-coloured cables; aisle after aisle of encaged server cabinets echoing with the metallic whir of hard drives and air conditioning units; important-looking LED server lights flickering and glowing like the bioluminescent flora of Avatarland. These buildings are essentially architectural computers. In this regard, another important architectural genealogy of the data centre is to be found in the massive mainframe computer systems of the 1940s. The US Army’s Electronic Numerical Integrator and Computer (ENIAC) at the University of Pennsylvania, IBM’s Selective Sequence Electronic Calculator (SSEC) in New York and the code-breaking Colossus computers at Bletchley Park (that helped in the cryptanalysis of the Lorenz cipher) once filled the floor-space of multiple rooms in research facilities. Much like these early computers, data centres are warehouse-scale machines. Rather than running military simulations, however, they manage the data and IT systems that run the world.

Cloud Conquest

Whereas colo facilities provided a real estate space for clients to house their IT equipment, the cloud removes the need for clients to physically own equipment. The cloud enables virtually anyone to (virtually) access data centre computing resources – from storage capacity to computing power – in a pay-as-you-go fashion. Underlying this computing paradigm is the reconceptualization of computing as a utility service, which is why the cloud is often referred to as the ‘fifth utility’, alongside electricity, gas, water and telephony.

Over the last two decades, businesses, governments and individuals have turned en masse to the cloud for quick, easy, scalable and on-demand computing resources. Consequently, key sectors of industrialized societies, including those essential to the functioning of today’s data-based economies, energy and communications systems, and those vital to national security, are increasingly mediated on many levels by data centres. These buildings not only deliver the digital products that we typically think of as ‘cloud services’, like Netflix, Adobe Cloud, Gmail, Spotify and social media, but are also increasingly responsible for storing and distributing the data, apps and IT systems that form the operational backbone of digital societies. As expectations for uninterrupted uptime become ever more inflexible, growing numbers of increasingly large data centres are being built to eliminate the possibility of IT failure.

Under economy-of-scale logics, globally-distributed backup facilities are being constructed to enable a new form of constant data centre uptime known as ‘Continuous Availability’. In the data centre industry, availability is measured and materialised through redundancy. Geo-replicating or ‘mirroring’ data in multiple locations provides greater redundancy against local-level outages (due to fires, flooding, power loss, system failure, security breaches, etc.). If for any reason an organization’s primary data centre should experience an outage, it will automatically ‘fail over’ to the multiple backup data centres located outside the disaster region, theoretically without any sign at the user-end that a disaster has even occurred. Idling in a state of always-on readiness, these backup facilities permanently wait on standby in case of an emergency situation. Haunted by the imagination of disaster and guided by logics of redundancy, resilience and contingency, the infrastructural excess of this extensive failover architecture has culminated in what geographer Stephen Graham has called ‘a whole incipient geography of back-up and repair spread across the world’.
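The failover logic described above can be illustrated with a minimal sketch. It is a toy model rather than any operator's actual system: the `Site` class, the `route_request` function and the site names and regions are all hypothetical, and real deployments rely on health checks, replication and traffic routing far more elaborate than a single flag.

```python
from dataclasses import dataclass


@dataclass
class Site:
    """A hypothetical data centre holding a mirrored copy of the data."""
    name: str
    region: str
    healthy: bool = True


def route_request(sites: list[Site]) -> Site:
    """Return the first healthy site; the primary is listed first."""
    for site in sites:
        if site.healthy:
            return site
    raise RuntimeError("no healthy site available")


# Illustrative names only: one primary and two standby mirrors
# located in different regions.
sites = [
    Site("primary", "london"),
    Site("backup-1", "frankfurt"),
    Site("backup-2", "dublin"),
]

served_before = route_request(sites).name

# Simulate a local outage at the primary (fire, flood, power loss...).
sites[0].healthy = False

# The same request is now answered by a standby site elsewhere.
served_after = route_request(sites).name
```

The point of the sketch is the cost structure it implies: the two backup sites must be powered and kept in sync at all times, even though, in the ordinary course of things, they serve no requests at all.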

Cloud Myths

A 2014 forecast by the International Data Corporation (IDC) predicted that by 2017 there would be roughly 8.6 million data centres in the world. This figure included everything from office-based IT closets to hyper-scale facilities, but IDC noted a trend of enormous ‘mega data centre’ expansion. While the global count of data centres may begin to decrease, the total worldwide data centre space is thus predicted to increase, from 1.58 billion square feet in 2013 to 1.94 billion square feet in 2018. One of the great myths of cloud storage is its space-saving potential. While living room shelf-space has been liberated from the weight of DVDs, CDs, books and video games, this ‘weight’ has not disappeared. Rather, it has simply been relocated into these expansive data warehouses.
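To put the forecast floor-space figures in proportion, the short calculation below works out the implied growth. It uses only the two figures quoted above; the variable names are illustrative, and the averaged annual rate assumes smooth compound growth over the five years, which the forecast itself does not claim.

```python
# Forecast worldwide data centre floor space, in billions of square feet.
space_2013 = 1.58
space_2018 = 1.94
years = 2018 - 2013

# Absolute and relative increase over the whole period.
added_space = space_2018 - space_2013
growth_pct = added_space / space_2013 * 100

# Assumption: growth compounds evenly year on year.
annual_pct = ((space_2018 / space_2013) ** (1 / years) - 1) * 100

print(f"Added floor space: {added_space:.2f} billion sq ft")
print(f"Total growth: {growth_pct:.1f}%")
print(f"Implied average annual growth: {annual_pct:.1f}%")
```

On these figures, total floor space grows by roughly 23 per cent in five years, even as the number of individual facilities is expected to level off.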

Another myth of the digital age is that of the ‘environmentally-friendly Internet’. Many data centres use as much electricity as a small town, and can cost their operators around $4 million a year in electricity alone, usually generated from non-renewable energy sources. A considerable portion of this electricity is used simply to keep redundant equipment idling on standby in case of a failure scenario. Corporations, banks and governments extol the virtues of going ‘paperless’ and increasingly encourage their customers to switch to online-based communications as an ‘eco-friendly’ alternative to the paper and petrol costs of snail mail. But this green rhetoric omits the excessive energy consumption and environmental destruction that these data centre-delivered digital services rely upon. For this reason, a growing number of anti-cloud activists have begun to raise awareness of the unimagined environmental and geopolitical realities of digital-industrial expansionism, arguing that the metaphorical conceit of the cloud strategically misleads the public by rhetorically erasing any sense of infrastructural physicality. Needless to say, all of our online activity – Google searching, Netflix streaming, endless tweeting, running WhatsApp, storing files in iCloud – uses up space and energy in data centres. Perhaps the greatest trick tech companies ever pulled was convincing the world that its data doesn’t exist, in physical form at least.

Consuming Computing

The cloud, then, is an extremely expensive and energy-intensive enterprise, not only because of the large capital outlays involved in constructing data centres but also because of the costs required to constantly power these places and their multiple failover sites. Because of the huge expenses involved, these buildings are only financially viable if they are switched on and in use all the time. Businesses, governments and individuals alike must therefore all be persuaded to mass-migrate to cloud-based data delivery and consumption models to ensure the profitability of this infrastructural excess. A parallel with the early electricity industry is illuminating in this regard (pun kind of intended). When Thomas Edison brought the lightbulb to market, he was faced with the problem that consumers only ever needed to use electricity at night. But running electricity generating plants was incredibly expensive and only financially viable if the public could be persuaded to purchase and use electrical goods throughout the day. By producing and extensively marketing such goods, a lifestyle was thus created based around the constant consumption of electricity and connection to the electric grid.

Similarly, the cloud is not just infrastructure, it is also a lifestyle, sold to us and defined by ideologies of seamless continuity and constant connectivity. A proliferating array of cloud applications, ‘smart’ things and ‘all you can eat’ data plans thus incite continuous cloud-connection, across devices, across cities and across users’ daily lives. No matter what activity we are doing there is now a cloud service or device designed to ensure that we can remain online while doing it (and ensure the continued extraction of data from increasingly intimate and previously inaccessible areas of everyday life). Powerful new ideologies of cloud connectivity, like the smart city, the Internet-of-Things and Apple’s Continuity, offer visions of a digital future defined by vast cyber-physical ecologies of data circulation where citizens and objects need never be offline or disconnected.

In much the same way that hostile architecture design aims to control how public spaces are used, we are thus finding an increasingly infrastructured hostility toward offline practices, with a growing number of civic services being built around the technopolitical push to the cloud and organised around normative assumptions of smartphone ownership or social media usage. Government services are being replaced by apps while urban transport systems increasingly attempt to route the public through the cloud by making contactless travel cheaper than paper tickets (thus enabling transport organisations to accumulate valuable data on people’s journey routes, distances and times). For governments, corporations, city planners and policy makers, cloud computing promises more efficiency, transparency and productivity through the exhaustive accumulation of data. The (mis)alignment between environmentalism and cloud services begins with the elimination of paper tickets and ends with the cashless economy.

The infrastructural excess of multi-data centre redundancy therefore does not simply ‘respond’ to an increasing demand for cloud consumption, but also actively participates in producing this demand. Media theorist Mél Hogan has suggested that data centres need to be reconceptualized less as data storage sites (responding to a supposed ‘data deluge’) and more as sites for generating data surplus.

The Bearable Lightness of Laptops

Nevertheless, it’s thanks to data centres that our digital devices are so light and portable. With more of our files and applications being stored in and streamed from data centres, the bulky storage drives, SD card slots and other expandable memory ports that once weighed down our devices are being stripped away and replaced with minimal capacity internal storage. As computing becomes a utility, laptops no longer have CD or DVD drives because we download our apps, programs and software online, directly from data centres. With most of our computing needs now implemented as web services, the main task left for our devices, as powerful as they are, is more and more just to act as portals to data centres.

But this lightness comes at a significant cost. Removing ports removes the possibility of adding storage with external hardware, and shrinking internal storage capacity means that users increasingly have little choice but to store their data in the cloud. Taking these devices off-cloud is often made deliberately unclear by tech manufacturers, with devices silently uploading files and images to cloud services. While the cloud promises new kinds of user freedom in the form of ‘automatic’, ‘unlimited’ and ‘effortless’ computation, it becomes increasingly difficult for users to operate off-cloud. With cloud storage now the default storage option on the majority of digital devices being developed – typically in the form of Apple iCloud, Google Drive, Dropbox or Microsoft OneDrive – we are all slowly but surely being herded onto the cloud.

With the cloud being increasingly lifestyled and infrastructured into a range of everyday social and economic practices and processes, data centres continue to grow in size. Far from a massive database in the sky, it is the planet’s surface and our everyday lives that are gradually being colonised by the cloud.

Alexander Taylor is also one of the guests on the Failed Architecture Podcast #01: Data Space.